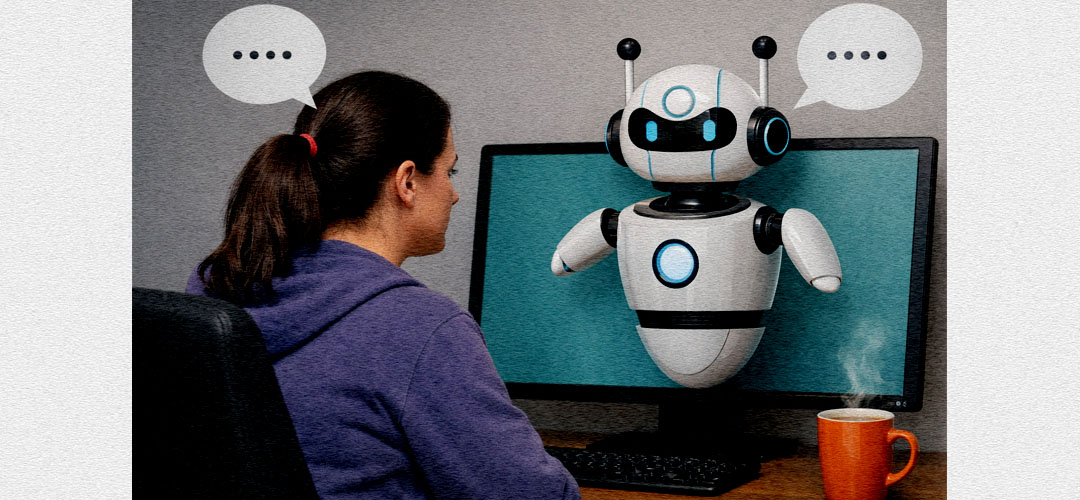

For decades, mental health support has remained frustratingly out of reach for millions. Whether blocked by cost, geography, endless waitlists, or the weight of stigma, countless people have struggled alone simply because accessing a therapist felt impossible. Now, artificial intelligence is stepping into this gap, offering mental health support that is available at the tap of a screen, 24/7, often free, and without the need to look another human in the eye. But as we welcome this accessibility, we must reckon with a fundamental truth: AI can open the door to mental health support, but it cannot replace the trained therapist waiting on the other side.

AI mental health tools have shattered traditional barriers with remarkable efficiency. No waitlists, no intake forms, no frantic searching for a therapist within your budget. For people in rural communities, AI provides a first touchpoint with mental health support that simply didn't exist before. The anonymity shouldn't be underestimated either. For many, particularly in cultures where mental health carries stigma, typing concerns into a chatbot feels infinitely safer than walking into a therapist's office.

AI excels at providing psychoeducation and at guiding users through breathing exercises and mindfulness practices with infinite patience. It can track your mood over time, identify triggers, and serve as valuable between-session support for those already in therapy. In crisis moments when a therapist isn't available, AI can provide immediate grounding techniques and direct people toward crisis helplines. These aren't trivial contributions; they're genuinely helpful interventions.

But at present, AI cannot diagnose. Current AI systems lack the clinical training to distinguish between grief and depression, anxiety and trauma responses, normal stress and early psychosis. A trained therapist draws on years of education and supervised practice to make these nuanced distinctions, which can mean the difference between helpful support and genuine harm. More fundamentally, AI currently lacks genuine human empathy. It simulates understanding but has never felt heartbreak, never experienced loss, never sat with trauma. The therapeutic relationship itself, that unique connection between therapist and client, is often the very thing that heals. Research shows the quality of this relationship is one of the strongest predictors of positive outcomes. You cannot code that into an algorithm.

At the moment, AI cannot pick up on what you're not saying. The slight shift in tone, the way you change subjects, the incongruence between words and affect, all go unnoticed. A skilled therapist reads these subtle cues, helping you explore areas you might not realise you're avoiding. Human therapists bring clinical judgement honed through years of training, knowing when to push and when to hold back, when symptoms suggest you need medication or intensive support. They carry professional accountability, bound by ethical standards. AI has no such accountability.

Perhaps most importantly, therapists can do the deep, complex work AI cannot touch. Working through relationship patterns, childhood wounds, or entrenched beliefs requires someone who can hold the full complexity of your experience. When you're working through grief, shame, or profound transitions, you need someone who can sit with you in the uncertainty, not offering quick fixes, but genuinely accompanying you through the difficulty. A good therapist will challenge your avoidance and the patterns that no longer serve you. AI, designed to be helpful and non-threatening, lacks both the judgement and the relational foundation to do this effectively.

AI is a brilliant first step, a gateway that helps people recognise they need support. It's valuable for mild to moderate concerns, psychoeducation, and building emotional awareness. But when the work gets deep, when trauma needs processing, when relationship patterns keep causing pain, that's when you need a human: a trained, qualified therapist who can meet you in the complexity of your trauma and guide you toward genuine healing.

The future of mental health support isn't AI versus therapists—it's both, used appropriately. AI democratises access, but it should be seen as a bridge to human therapy, not a replacement. The ultimate goal should always be connecting people who need deeper help with qualified professionals who can provide it.

A final word of caution: if you're experiencing suicidal thoughts, severe depression, trauma, psychosis, or any serious mental health crisis, please seek help immediately. Contact a crisis helpline, go to an emergency room, or reach out to a qualified mental health professional. Your life and wellbeing are too important to leave to an algorithm.

Mental health support is becoming more accessible, and that's cause for celebration. But let's not confuse accessibility with adequacy. AI opens doors, but genuine healing still requires the irreplaceable presence of another human being—trained, compassionate, and fully present with you in your struggle. Both have their place. Both have value. But they are not, and never will be, the same thing.
